The following article is an extended abstract submitted as part of AAAI’s New Faculty Highlights Program. Read the full-length article at this link: https://onlinelibrary.wiley.com/doi/10.1002/aaai.12068.

Search and Learning for Unsupervised Text Generation

Lili Mou

Department of Computing Science & Alberta Machine Intelligence Institute (Amii)

University of Alberta, Edmonton

Abstract

With the advances of deep learning techniques, text generation is attracting increasing interest in the artificial intelligence (AI) commu- nity, because of its wide applications and because it is an essential component of AI. Traditional text generation systems are trained in a supervised way, requiring massive labeled parallel corpora. In this paper, I will introduce our recent work on search and learning ap- proaches to unsupervised text generation, where a heuristic objective function estimates the quality of a candidate sentence, and discrete search algorithms generate a sentence by maximizing the search objective. A machine learning model further learns from the search results to smooth out noise and improve efficiency. Our approach is important to the industry for building minimal viable products for a new task; it also has high social impacts for saving human annotation labor and for processing low-resource languages.

Text generation is a fundamental and long-lasting problem in natural language processing (NLP) and artificial intelli- gence (AI), with wide applications such as conversational agents, news headline generation, and grammatical error correction. Early text generation systems are mainly based on rules and templates; thus, the generated text lacks flexibility and the applica- tions are restricted to certain narrow domains, for example, report generation (Kukich 1983) and weather forecasting (Goldberg, Driedger, and Kittredge 1994).

In recent years, deep learning has achieved remarkable progress in various text generation tasks (Sutskever, Vinyals, and Le 2014; Vaswani et al. 2017). Due to the high modeling capacity, deep neural networks are able to capture the complexity of languages, and generate more diverse and natural texts than rule-based systems. However, neural models are known to be data- hungry, usually requiring massive labeled input–output pairs, known as parallel corpora. For example, the widely used corpus provided by the 2014 Workshop of Machine Translation contains more than 4 million pairs of English–German sentences (Bojar et al. 2014). This is prohibitively expensive for deep learning models being applied to new domains, new tasks, and low-resource languages.

In this paper, I will introduce our recent progress on unsupervised text generation, which does not require parallel data for training. We formulate the text generation task as a search problem, where we define a heuristic objective function, typically involving language fluency, semantic coherency, and other task-specific constraints. Then, we perform discrete search, for example, simulated annealing (Liu et al. 2020), to generate the output sentence by maximizing the search objective. Further, a machine learning model may learn from the search results to smooth out search noise and improve inference efficiency. In this way, our approach is unsupervised, yet achieving high performance in a variety of tasks.

Social good

Unsupervised text generation can benefit our society in various aspects.

First, unsupervised models are important to the AI industry, which typically requires minimal viable products before investing substantial resources, including funding and human labor. Therefore, our unsupervised techniques are particularly suitable for new tasks and startup companies, where massive annotated data are either unavailable or not affordable.

Second, unsupervised text generation will help low-resource language processing. Training supervised deep learning NLP models requires massive labeled corpora, which only exist for several most-spoken languages. This prohibits the applications of deep learning to low-resource languages. Our approaches, instead, do not require labeled data, and can be potentially applicable to different languages.

Last but not least, our unsupervised techniques save human labor for data annotation. In machine learning, massive labeled data are often annotated by mechanical Turks, and this could be expensive and time-consuming; asking annotators to write sentences for text generation tasks is especially cumbersome and often yields poor-quality corpora. Our unsupervised text generation largely reduces human labor, and may provide an initial draft for mechanical Turks to edit.

Methodology

We tackle unsupervised text generation by search approaches. Since we do not have parallel data for training, we heuristically define a scoring function that roughly estimates the quality of a candidate output sentence given some input in a certain task. Such a heuristic function is designed by decomposing different task requirements, including language fluency modeled by a language model (Radford et al. 2019), semantic coherence by the cosine similarity of vector feature representations (Pagliardini, Gupta, and Jaggi 2018), and other task-specific constraints. For example, paraphrasing requires that the generated sentence should be different from the input (Liu et al. 2020), whereas summarization requires the text should be shorter (Schumann et al. 2020).

Then, we perform stochastic local search to maximize the objective function for unsupervised text generation. Specifically, we iteratively perform local edits of the sentence, including word insertion, deletion, and replacement. If the new candidate sentence has a higher score, then we will accept the edit and move on to the next iteration, or otherwise, we tend to reject the edit; this is known as hill-climbing search (Schumann et al. 2020). Alternatively, we may reserve a small probability for accepting a worse sentence, so that our algorithm is less greedy and can better explore the search space. Over the search iteration, we will make our algorithm more greedy for better convergence, known as simulated annealing (Liu et al. 2020).

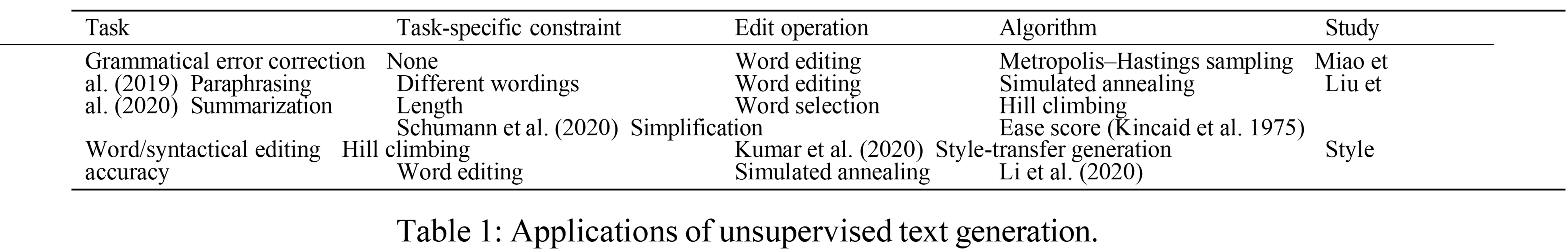

Our search space and edit operations may be specifically designed based on the task of interest. For summarization (Schu- mann et al. 2020), we perform word-level extraction, where the search space is the selection of words from the input sentence, instead of from the entire vocabulary. For text simplification (Kumar et al. 2020), we design syntax-based editing, which ma- nipulate the parse tree of a sentence. Table 1 summarizes the applications and the approaches we have explored in our recent studies.

Moreover, we propose to train a machine learning model from the search results (Li et al. 2020). This can not only smooth out the search noise (Jolly, Dengel, and Mou 2021), but also very largely improve the efficiency when our algorithm is de- ployed (Liu, Huang, and Mou 2022; Liu, Zhang, and Mou 2022). In general, our approach generates high-quality text for a variety of tasks, and the performance is close to supervised methods in the tasks of paraphrasing (Li et al. 2020) and text simplification (Kumar et al. 2020).

Future directions

The research presented in this paper points to several important future directions. One fundamental research question is how to perform efficient search in the sentence space, which can be inspired by the reinforcement learning community, for example, using Monte Carlo tree search (Coulom 2006). On the other hand, our search and learning approaches are a generic frame- work and may be applicable to various tasks and domains, such as few-shot text generation (Jolly, Dengel, and Mou 2021), controllable text generation (Dong et al. 2021), and molecule generation (Liu et al. 2021).

Acknowledgments

The work was partially supported by the Natural Sciences and Engineering Research Council of Canada (NSERC) under grant No. RGPIN-2020-04465, the Alberta Machine Intelligence Institute (Amii) Fellow Program, the Canada CIFAR AI Chair Program, Compute Canada (www.computecanada.ca), a UAHJIC project, and a donation from DeepMind.

Biographical statement

Dr. Lili Mou is an Assistant Professor at the Department of Computing Science, University of Alberta. He is also an Alberta Machine Intelligence Institute (Amii) Fellow and a Canada CIFAR AI (CCAI) Chair. Lili received his BS and PhD degrees in 2012 and 2017, respectively, from School of EECS, Peking University. After that, he worked as a postdoctoral fellow at the University of Waterloo. His research interest is mainly in machine learning methods for natural language processing. He has more than 50 publications at top conferences and journals, and has presented conference tutorials at EMNLP-IJCNLP’19 and ACL’20. He received a New Faculty Highlight Award in the AAAI’21 conference.

Conflict of interest

The author has no conflicts of interest to report.

References

Bojar, O.; Buck, C.; Federmann, C.; Haddow, B.; Koehn, P.; Leveling, J.; Monz, C.; Pecina, P.; Post, M.; Saint-Amand, H.; Sori- cut, R.; Specia, L.; and Tamchyna, A. 2014. Findings of the 2014 workshop on statistical machine translation. In Proceedings of the Ninth Workshop on Statistical Machine Translation, 12–58.

Coulom, R. 2006. Efficient selectivity and backup operators in Monte-Carlo tree search. In International Conference on Computers and Games, 72–83.

Dong, C.; Huang, C.; Za¨ıane, O.; and Mou, L. 2021. Simulated annealing for emotional dialogue systems. In Proceedings of the 30th ACM International Conference on Information and Knowledge Management, 2984–2988.

Goldberg, E.; Driedger, N.; and Kittredge, R. 1994. Using natural-language processing to produce weather forecasts. IEEE Expert 9(2):45–53.

Jolly, S.; Dengel, A.; and Mou, L. 2021. Search and learn: Improving semantic coverage for data-to-text generation. In

Proceedings of the AAAI Conference on Artificial Intelligence, 10858–10866.

Kincaid, J. P.; Fishburne Jr, R. P.; Rogers, R. L.; and Chissom, B. S. 1975. Derivation of new readability formulas (automated readability index, fog count and flesch reading ease formula) for navy enlisted personnel. Technical report, Naval Technical Training Command Millington TN Research Branch.

Kukich, K. 1983. Design of a knowledge-based report generator. In Proceedings of the 21st Annual Meeting of the Association for Computational Linguistics, 145–150.

Kumar, D.; Mou, L.; Golab, L.; and Vechtomova, O. 2020. Iterative edit-based unsupervised sentence simplification. In

Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 7918–7928.

Li, J.; Li, Z.; Mou, L.; Jiang, X.; Lyu, M.; and King, I. 2020. Unsupervised text generation by learning from search. In

Advances in Neural Information Processing Systems, 10820–10831.

Liu, X.; Mou, L.; Meng, F.; Zhou, H.; Zhou, J.; and Song, S. 2020. Unsupervised paraphrasing by simulated annealing. In

Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 302–312.

Liu, X.; Li, P.; Meng, F.; Zhou, H.; Zhong, H.; Zhou, J.; Mou, L.; and Song, S. 2021. Simulated annealing for optimization of graphs and sequences. Neurocomputing 465:310–324.

Liu, P.; Huang, C.; and Mou, L. 2022. Learning non-autoregressive models from search for unsupervised sentence summariza- tion. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, 7916–7929.

Liu, P.; Zhang, X.; and Mou, L. 2022. A character-level length-control algorithm for non-autoregressive sentence summariza- tion. arXiv preprint arXiv:2205.14522.

Miao, N.; Zhou, H.; Mou, L.; Yan, R.; and Li, L. 2019. CGMH: Constrained sentence generation by metropolis-hastings sampling. In Proceedings of the AAAI Conference on Artificial Intelligence, 6834–6842.

Pagliardini, M.; Gupta, P.; and Jaggi, M. 2018. Unsupervised learning of sentence embeddings using compositional n-gram fea- tures. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 528–540.

Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I.; et al. 2019. Language models are unsupervised multitask learners. OpenAI Blog.

Schumann, R.; Mou, L.; Lu, Y.; Vechtomova, O.; and Markert, K. 2020. Discrete optimization for unsupervised sentence summarization with word-level extraction. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 5032–5042.

Sutskever, I.; Vinyals, O.; and Le, Q. V. 2014. Sequence to sequence learning with neural networks. In Advances in Neural Information Processing Systems, 3104–3112.

Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A. N.; Kaiser, Ł.; and Polosukhin, I. 2017. Attention is all you need. In Advances in Neural Information Processing Systems, 5998–6008.